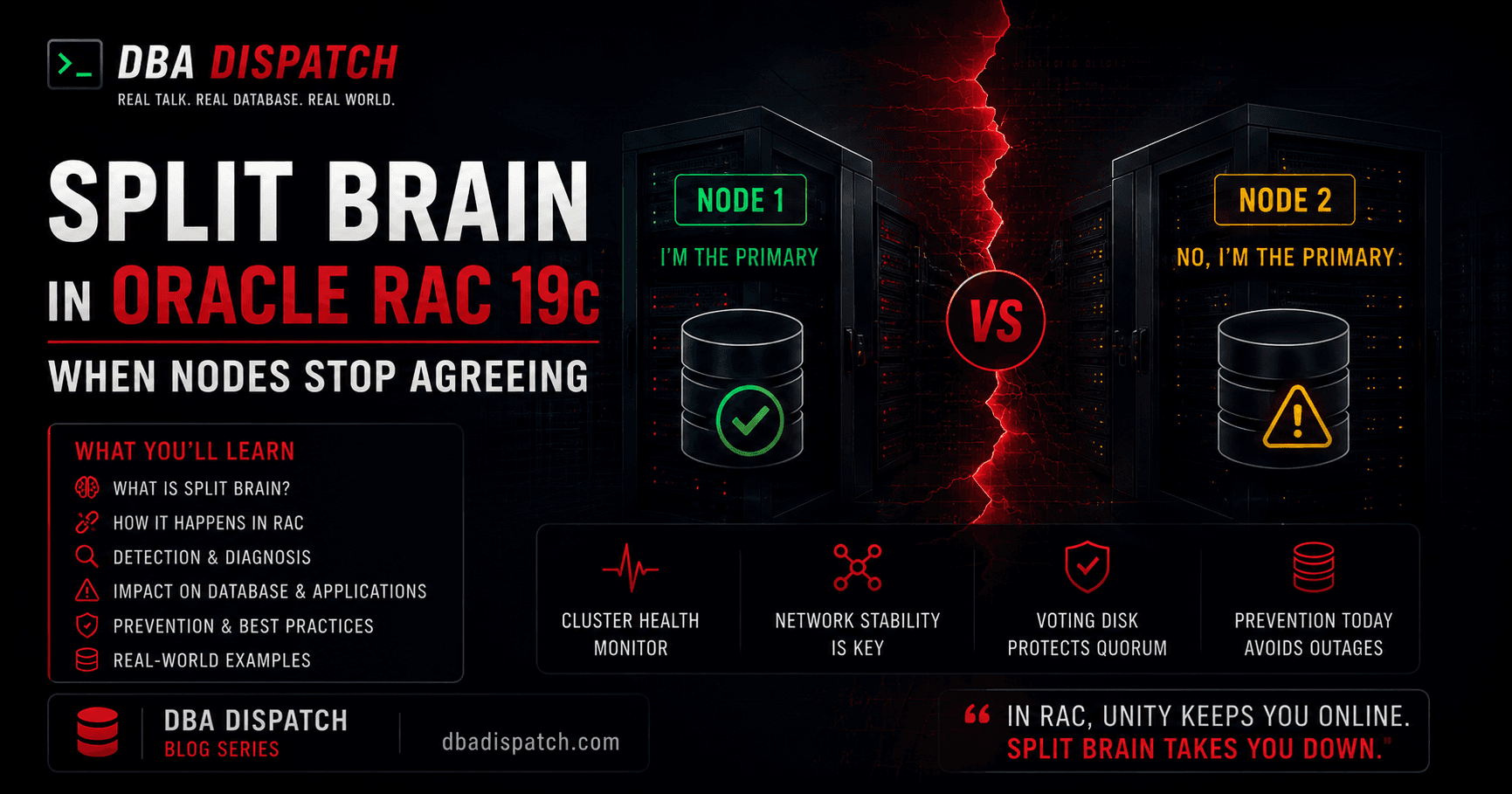

Split Brain in Oracle RAC 19c: When Nodes Stop Agreeing

Tags: Deep Dive · Oracle RAC · 19c · High Availability

Published: May 6, 2026 · 14 min read

Split brain is the silent killer of clustered databases. Nodes that once shared a coherent view of the world suddenly disagree — and each believes it is the sole authority. The consequences range from data corruption to complete cluster collapse.

What Is Split Brain?

In an Oracle RAC 19c cluster, every node must maintain a consistent, shared view of cluster state. This coordination happens through the private interconnect network. A split brain occurs when this interconnect fails — partially or completely — and two or more nodes can no longer communicate with each other.

When isolated from its peers, each surviving node faces an existential question: Am I the one that should keep running, or should I stop and cede control? Without a reliable answer, a naive node might continue modifying shared storage — simultaneously with another node that reached the exact same conclusion.

The result: two nodes both writing to the same datafiles, each unaware of the other's changes. Data structures are shredded. Corruption becomes near-certain.

How Oracle RAC 19c Prevents It

Oracle does not leave cluster membership to chance. RAC 19c employs a layered defense system rooted in the Cluster Synchronization Services (CSS) stack, which is part of Oracle Grid Infrastructure.

1. Voting Disks

The primary weapon against split brain is the voting disk: a small file on shared storage (ASM, NFS, or block devices), deployed as an odd-numbered set. Each node must be able to access a strict majority of the voting disks at all times during cluster operation. If a node loses access to half or more of them, it fences itself by triggering a node reboot rather than risk a split-brain condition.

Why odd numbers matter

Voting disks must always be deployed in odd numbers (3, 5, 7…). This ensures a clear majority is always mathematically possible. An even count creates scenarios where neither partition can claim a majority, leading to both halves fencing themselves — a total cluster outage.
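The arithmetic behind this rule can be sketched in a few lines of shell. The function names below are illustrative and not part of any Oracle tool; the sketch only assumes that a strict majority of n disks is floor(n/2) + 1.

```shell
# Illustrative helpers (hypothetical names, not Oracle tooling).

# Minimum number of voting disks a node must reach for a strict majority.
quorum_needed() {
    echo $(( $1 / 2 + 1 ))
}

# Number of voting disk failures that still leave a strict majority.
failures_tolerated() {
    echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 3 4 5; do
    echo "disks=$n quorum=$(quorum_needed "$n") tolerated=$(failures_tolerated "$n")"
done
```

Running it prints `tolerated=1` for both 3 and 4 disks and `tolerated=2` for 5: the fourth disk raises the quorum a node must reach without letting it survive any additional failure.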

2. Node Eviction (STONITH)

When CSS determines a node is unreachable, it initiates node eviction — forcibly removing the suspected node from the cluster. Oracle implements this through the Shoot The Other Node In The Head (STONITH) principle: a suspected node is rebooted via the cluster interconnect or via IPMI/iLO out-of-band management before its instance can corrupt shared data.

3. Network Redundancy

RAC 19c strongly recommends — and in most production deployments mandates — redundant private interconnect networks. Bonded NICs or multiple separate NIC paths for the clusterware heartbeat mean a single cable pull or switch failure does not sever node communication entirely.
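One way to check how many private interfaces the clusterware knows about is to parse the output of `oifcfg getif`. The sketch below assumes the usual four-column layout (interface, subnet, scope, type); the function names are hypothetical. A bonded interface appears as a single entry, so a count of one does not by itself mean there is no redundancy.

```shell
# Count interfaces registered for the private interconnect.
# Reads `oifcfg getif` output on stdin; the 4th column is the interface type,
# e.g. "public", "cluster_interconnect" or "cluster_interconnect,asm".
count_interconnects() {
    awk '$4 ~ /cluster_interconnect/ { n++ } END { print n + 0 }'
}

# Warn when fewer than two private interfaces are registered.
# Note: a bond (e.g. bond0) counts as one interface here.
check_interconnect_redundancy() {
    count=$(count_interconnects)
    if [ "$count" -ge 2 ]; then
        echo "OK: $count interconnect interfaces"
    else
        echo "WARNING: only $count interconnect interface(s)"
        return 1
    fi
}

# Usage on a cluster node (as the Grid Infrastructure owner):
#   oifcfg getif | check_interconnect_redundancy
```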

The Anatomy of a Split Brain Event

Interconnect failure

A switch fails, a NIC dies, or network congestion causes heartbeat packets to drop. CSS begins counting missed heartbeat cycles.

CSS detection window expires

The `misscount` timer expires (default: 30 seconds). CSS declares the remote node as "suspect." The voting disk quorum algorithm kicks in.

Quorum decision

Whichever partition holds a majority of voting disk votes survives. The minority partition triggers an immediate REISUB-style kernel reboot via the `cssdagent` watchdog process.

Instance recovery

The surviving node performs crash recovery for all evicted instances, replaying redo logs to bring the database to a consistent state. The evicted node reboots and rejoins the cluster.
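To put rough numbers on the detection window, the sketch below prints when successive fractions of misscount are reached after the last good heartbeat. The 50%, 75%, and 90% marks are an assumption based on the warning stages CSS commonly logs before an eviction; verify them against your own alert log. The function name is hypothetical.

```shell
# Print when (in seconds after the last good heartbeat) each stage is reached.
# Argument: misscount in seconds (30 is the default quoted in this article).
# The 50/75/90 percent stages are an assumption, not taken from this article.
heartbeat_timeline() {
    misscount=$1
    for pct in 50 75 90 100; do
        # Shell arithmetic is integer-only, so fractions are truncated.
        echo "$pct% of misscount reached after $(( misscount * pct / 100 ))s"
    done
}

heartbeat_timeline 30
```

With the default of 30 seconds, the stages fall at 15s, 22s (22.5 truncated by integer arithmetic), 27s, and 30s, which shows how little time there is to react once heartbeats start going missing.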

Diagnosing a Split Brain Incident

After any unexpected node eviction, your first stop is the CSS and CRSD alert logs. In Oracle Grid Infrastructure 19c, these live under:

$ORACLE_BASE/diag/crs/<hostname>/crs/trace/

├── alert.log # CSS/CRS high-level event log

├── cssd.trc # CSS daemon — heartbeat and voting

├── crsd.trc # CRS daemon — resource state

└── ocssd.trc # Oracle CSS daemon (ocssd)

Search for telltale patterns that indicate a split brain scenario:

# Voting disk read failure

clssnmvDiskPing: ping to disk 0 failed

# Node eviction initiated

clssscEjectNode: evicting node 2 from cluster

# CSS reconfig — cluster is reforming after eviction

CSS is reconfiguring - a node has left the cluster

# Heartbeat miss counter incrementing

clssscl_NodeMissed: node 2, missed heartbeat count 1..30
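A small helper can count how often each of these patterns appears in a given log file. This is a minimal sketch: the function name is hypothetical, and the pattern strings are simply the ones listed above, so adjust them to whatever your Grid Infrastructure version actually writes.

```shell
# Count occurrences of each eviction-related pattern in a log file.
# The patterns are the ones listed in this article; exact message text
# may differ between versions.
scan_css_log() {
    logfile=$1
    for pattern in 'clssnmvDiskPing' 'clssscEjectNode' 'CSS is reconfiguring' 'clssscl_NodeMissed'; do
        # grep -c prints the number of matching lines (0 when none match);
        # "|| true" keeps the loop going when grep exits non-zero.
        count=$(grep -c -- "$pattern" "$logfile" || true)
        echo "$pattern: $count"
    done
}

# Usage (directory taken from the listing above):
#   scan_css_log "$ORACLE_BASE/diag/crs/<hostname>/crs/trace/alert.log"
```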

OSWatcher is your friend

Oracle OSWatcher (oswbb) captures OS-level network and system statistics at regular intervals. If deployed before the incident, it can show exactly when NIC errors or network saturation began — invaluable for root cause analysis.

Checking Voting Disk Status

$ crsctl query css votedisk

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE a8f34bc12d... (/dev/sdd) [DATA]

2. ONLINE c201ef93a4... (/dev/sde) [DATA]

3. ONLINE 77b9102cd1... (/dev/sdf) [DATA]

Located 3 voting disk(s).

All voting disks should show ONLINE. Any showing OFFLINE reduces your quorum headroom and increases split-brain risk.
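The same check can be scripted by parsing the command output. The sketch below assumes the row layout shown above (an index such as `1.` followed by the state); the function name is hypothetical. It exits non-zero when the ONLINE disks no longer form a strict majority.

```shell
# Summarise `crsctl query css votedisk` output read from stdin.
# Exit status is 0 only if the ONLINE disks form a strict majority.
votedisk_quorum() {
    awk '
        $1 ~ /^[0-9]+\.$/ {            # data rows start with "1.", "2.", ...
            total++
            if ($2 == "ONLINE") online++
        }
        END {
            needed = int(total / 2) + 1
            printf "online=%d total=%d needed=%d\n", online, total, needed
            exit (total > 0 && online >= needed) ? 0 : 1
        }
    '
}

# Usage on a cluster node:
#   crsctl query css votedisk | votedisk_quorum || echo "quorum at risk"
```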

Common Root Causes

| Cause | Description |
|---|---|
| Single-path interconnect | No NIC bonding — one cable failure kills the heartbeat entirely. |
| Shared public/private NICs | Business traffic starves heartbeat packets during peak load. |
| Voting disk I/O latency | Storage latency spikes cause missed voting disk reads, triggering false evictions. |
| Firewall misconfiguration | Stateful firewalls dropping UDP heartbeat packets after idle periods. |
| Aggressive misscount tuning | Over-reduced misscount/disktimeout values causing false positives under load. |
| OS kernel hangs | Memory pressure or I/O storms causing the OS to stop processing CSS timers. |

Prevention Best Practices

Infrastructure

- Dedicate the private interconnect. Never share private NIC ports with public or storage traffic.

- Bond your interconnect NICs. Use active-active bonding (LACP/802.3ad) for both throughput and fault tolerance.

- Deploy 3+ voting disks on separate physical spindles or separate ASM failure groups.

- Use a network switch exclusively for RAC interconnect — never route private traffic through the same switch as public traffic.

CSS Tuning

Resist the urge to aggressively lower CSS timeouts. In most cases, the defaults are well-calibrated:

$ crsctl get css misscount

CRS-4678: Successful get misscount 30

$ crsctl get css disktimeout

CRS-4678: Successful get disktimeout 200

$ crsctl get css reboottime

CRS-4678: Successful get reboottime 3

If you must reduce misscount for faster failover, always validate the change under realistic load conditions — the cost of a false eviction almost always exceeds the benefit of shaving a few seconds off detection time.
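To catch drift from these values, the `crsctl get css` output can be compared against the defaults quoted above. This is a sketch with hypothetical function names; it assumes the value is the token that follows the parameter name on the `CRS-4678` line.

```shell
# Extract the numeric value from a line such as
#   "CRS-4678: Successful get misscount 30"
# Argument: the parameter name to look for.
css_value() {
    awk -v p="$1" '/CRS-4678/ { for (i = 1; i < NF; i++) if ($i == p) { print $(i + 1); exit } }'
}

# Compare a setting (read from stdin) against an expected default.
check_css_default() {
    param=$1
    expected=$2
    actual=$(css_value "$param")
    if [ "$actual" = "$expected" ]; then
        echo "$param=$actual (default)"
    else
        echo "$param=$actual (differs from default $expected)"
        return 1
    fi
}

# Usage on a cluster node:
#   crsctl get css misscount   | check_css_default misscount 30
#   crsctl get css disktimeout | check_css_default disktimeout 200
```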

Monitoring

- Alert on voting disk I/O latency > 100ms — this is the canary in the coal mine.

- Monitor the `alert.log` for `clssscl_NodeMissed` entries, even if they don't lead to eviction.

- Track interconnect error counters (`ethtool -S <nic>`) for CRC errors and drops.

- Use Oracle Enterprise Manager's Cluster Health Monitor (CHM) for continuous OS-level cluster telemetry.
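For the interconnect counters, a filter over `ethtool -S` output can surface only the counters worth looking at. Counter names vary by NIC driver, so the name match below is a heuristic and the function name is hypothetical.

```shell
# Print NIC counters that look like errors or drops and are non-zero.
# Reads `ethtool -S <nic>` output on stdin ("     name: value" per line).
# Counter names vary by driver, so the name filter is only a heuristic.
nonzero_error_counters() {
    awk -F': *' '
        NF == 2 && $1 ~ /(err|drop|crc|discard)/ && $2 + 0 > 0 {
            gsub(/^[ \t]+/, "", $1)     # strip leading indentation
            print $1 "=" $2
        }
    '
}

# Usage (replace <nic> with a private interconnect interface):
#   ethtool -S <nic> | nonzero_error_counters
```

Sampling this at intervals and comparing successive values shows whether errors are still accumulating or are left over from an old incident.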

Recovery After a Split Brain Event

If Oracle's CSS handled the split brain correctly, the surviving node will have already performed crash recovery. Your tasks are:

- Verify database consistency via RMAN `VALIDATE DATABASE`.

- Review alert logs on both nodes to understand the timeline of eviction.

- Inspect OS and network logs on the evicted node for the root interconnect failure.

- Fix the underlying cause before rejoining the evicted node to the cluster.

- Document the incident and calculate RPO/RTO impact.

⚠️ Never manually restart a RAC instance after a split brain

If you suspect the cluster's split-brain resolution did not complete cleanly, or if you are uncertain which node was evicted, do not manually start the database instance. Starting an instance before CSS arbitration has completed is exactly how you get the corruption that split-brain resolution is designed to prevent. First verify that CSS is fully operational using `crsctl check css`.
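A scripted guard can make this check harder to skip. The sketch below assumes that a healthy CSS reports message `CRS-4529` ("Cluster Synchronization Services is online"); the function name and the placeholders in the usage comment are hypothetical.

```shell
# Succeed only if the `crsctl check css` output on stdin reports CSS as online.
# Assumes the online message carries the code CRS-4529.
css_is_online() {
    grep -q 'CRS-4529'
}

# Usage on the node you intend to bring back:
#   if crsctl check css | css_is_online; then
#       echo "CSS online; start the instance through clusterware, e.g."
#       echo "  srvctl start instance -db <db_unique_name> -instance <instance_name>"
#   else
#       echo "CSS not confirmed online; do not start the instance" >&2
#   fi
```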

Conclusion

Split brain in Oracle RAC 19c is a well-understood failure mode with mature defenses built into the clusterware stack. The voting disk quorum mechanism and CSS node eviction are Oracle's guarantee that a partition will always be resolved deterministically — at the cost of a node reboot rather than data integrity.

The real work happens before any failure: building redundant interconnects, properly sizing voting disk groups, and knowing your CSS timeout parameters cold. An operator who truly understands split brain prevention rarely has to deal with split brain recovery.

Oracle RAC 19c · Grid Infrastructure 19.3+ · Applicable to both on-premises and ExaCS deployments.

Always consult MOS Note 1212703.1 for the latest Oracle-recommended CSS tuning guidance.